A Vision for Public Engagement

Maximize Impact in Raising Awareness of AI Risks

The AI Risk Spectrum

This chart maps the perceived spectrum of AI risk — from those who dismiss it entirely to those who see it as an existential threat.

Every curve and segment boundary on this page is yours to shape. Visit Settings to play with the sliders — adjust the distribution curves, shift where one segment ends and another begins, and craft your own variation of this story.

AI risk is essentially zero — technology is neutral or inherently beneficial.

Risks exist but are manageable through existing institutions and market forces.

Real risks need attention, but careful governance can keep things on track.

Worried about risks already materializing — surveillance, job displacement, algorithmic bias, misinformation.

Worried about emerging and escalating threats — bioterrorism, dystopian control, power oligarchy, automated warfare.

AI poses a genuine existential threat — current trajectory leads to catastrophe or human extinction.

Who Thinks What?

Scroll through each segment of the spectrum to understand the perspective behind the position.

The Dismissive

This group sees AI risk as essentially zero. Technology, they argue, is neutral — or even inherently beneficial. Historical precedent shows that every major technology was met with unfounded fear. AI is no different.

The Unconcerned

They acknowledge risks exist, but believe existing institutions — markets, governments, courts — are well-equipped to handle them. Innovation should not be stifled by speculative fears.

The Cautiously Optimistic

Real risks demand real attention, but careful governance can keep things on track. This is the most common position: optimistic about AI's potential while insisting on guardrails, standards, and oversight.

Concerned About Current Risks

Worried about risks already materializing today — pervasive surveillance, mass job displacement, algorithmic bias amplifying inequality, and AI-generated misinformation eroding trust in shared reality.

Concerned About Future Risks

Looking further ahead: AI-enabled bioterrorism, dystopian social control, power consolidating into an AI oligarchy, and autonomous weapons making war frictionless and perpetual.

The Alarmed

AI poses a genuine existential threat. The current trajectory — racing toward superintelligence without adequate safety — leads to catastrophe. Some in this group give humanity less than even odds of surviving the century.

The Conversion Funnel

The same population, reshaped — from widespread dismissal at the top to a small core of alarmed voices at the bottom.

Lethal Intelligence

Lethal Intelligence sits at the very top of the conversion funnel, targeting the general public. It doesn't assume people are already on board with the premise — instead, it aims to lure them in.

Through memes, short videos, animated explainers, long-form educational content, clips from luminaries, and all manner of visually engaging, viral content, it sparks curiosity and interest — guiding people through the journey from casual dismissal to genuine concern.

It operates across every layer of awareness: from those who haven't thought about AI risk at all, to those already leaning cautious. The goal is to move them down the funnel, one step at a time.

In essence, Lethal Intelligence is a router — it onboards, enlists, and activates people who are ready to act. Once activated, they're handed off to Humans First to channel that energy into action.

Goal 1: Change the Shape of the Curve

Shift public perception so more people recognise the severity of AI risk — moving the distribution curve from complacency toward concern.

Goal 2: Risk Awareness Force

Grow a community of passionate supporters.

Every concerned citizen who reaches the end of the conversion funnel becomes a crusader who starts a chain reaction.

Self-feeding hypergrowth feedback loop

Make AI risk a dinner-table conversation — critical mass creates unstoppable momentum.

Every advocate becomes a missionary — each conversation plants a seed, turning bystanders into crusaders.

Preachers of awareness drive media coverage, policy discussion, and public demand for AI safety.

Why Does Grassroots Change Matter?

Politicians lead from behind. They don't shape public opinion — they follow it. Without massive demand from the general public, the decision-makers we need simply won't exist.

Without public demand, the leaders we need won't exist.

This is why grassroots change matters — and why Lethal Intelligence works to build the awareness that creates the demand.

Perceptions Disconnect

Comparing perspectives: the same distribution looks different through different lenses.

TPOT (this part of Twitter)

A few thousand smart people spend their days in a filter-bubble, preaching to the choir about how powerful AI is, how disruptive this technology will be, how it will reshape everything. We assume this is just general knowledge — that everyone sees what's coming.

In reality, the vast majority of people have no idea. They know something is happening with AI, but they think it's a glorified search engine and a fun trick for generating fake videos.

They don't realize it's about to completely disrupt all human value, human labor, and human decision-making. (Exposure to Reddit or just touching grass and speaking to “normies” makes this very obvious — we basically live in a different reality.)

AI Safety Community

If TPOT lives in a bubble, the AI safety community lives in a bunker. Most volunteers at AI safety organizations live and breathe AI risk. They've read the papers, they've run the scenarios, and they're genuinely terrified.

The problem? To the average person on the street, they look like conspiracy theorists. Tinfoil-hat types warning about a robot apocalypse. The disconnect between how safety advocates see themselves — as informed, rational people sounding a necessary alarm — and how the public sees them is staggering.

Most people don't even know these movements exist. And when they do encounter the message, the framing feels so alien to their everyday experience that it gets dismissed outright. This isn't a knowledge problem — it's a communication chasm.

Strategies

Organic Growth

The gift that keeps on giving. Every person who truly grasps the risk becomes a broadcaster in their own right — reaching audiences no ad budget ever could.

Cross-Pollinate Distribution

Maximize every piece of content that has already proven it can go viral. A story that blew up on r/openai gets posted to r/anthropic. A viral X thread becomes an Instagram carousel for a creator with a matching audience. Proven content, new eyeballs.

Build In-House Muscle

Don’t outsource the movement. Develop internal capabilities — content creators, community managers, data analysts — so the mission stays authentic and self-sustaining.

The Engagement Trade-Off

Why easy-to-share content and real-world impact pull in opposite directions — and what it means for strategy.

Reach peaks at low X-risk. Content about tech billionaires, job loss, or AI bias goes viral easily.

Reach drops off at high X-risk. Existential risk sounds like sci-fi — abstract, not relatable.

Low impact at low X-risk. Nobody takes to the streets just because they dislike Zuckerberg.

Impact per person rises exponentially toward high X-risk. Those who grasp it will move heaven and earth.

Total Impact = Reach × Impact per Person. Each unit of attention invested in high x-risk content delivers exponentially more real-world change than easy viral content.

This is why we cannot ignore the high X-risk side of the spectrum.

Not because it’s easy, but because each person who responds to this content becomes a force multiplier that no amount of viral content can match.
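As a rough numeric illustration of the Total Impact = Reach × Impact per Person relationship, the sketch below multiplies two made-up curves over an X-risk level running from 0 (easy viral content) to 1 (full existential-risk content). The curve shapes, the function names, and every parameter value are assumptions chosen for illustration, not measured data. The result depends on impact per person growing faster than reach falls off; if the assumed rates were reversed, the conclusion would flip.

```python
import math

# All numbers below are hypothetical placeholders, not measurements.

def reach(x, peak=1_000_000, decay=3.0):
    """Assumed audience size for content at X-risk level x in [0, 1]."""
    return peak * math.exp(-decay * x)

def impact_per_person(x, base=0.001, growth=6.0):
    """Assumed real-world impact per person reached, rising exponentially."""
    return base * math.exp(growth * x)

def total_impact(x):
    """Total Impact = Reach x Impact per Person."""
    return reach(x) * impact_per_person(x)

print(f"{'x-risk':>6} {'reach':>12} {'impact/person':>14} {'total':>10}")
for x in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"{x:>6.2f} {reach(x):>12.0f} {impact_per_person(x):>14.4f} {total_impact(x):>10.0f}")
```

With these assumed rates, reach shrinks as content gets more serious while total impact still climbs, which is the shape of the trade-off described above.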

The Strategy: Meet People Where They Are

The best approach is to strategically target different audiences with content at different levels of X-risk seriousness and academic rigor. When infiltrating communities online — for example broadcasting across thousands of subreddit communities — people who are unaware of the risks are not ready to be hit head-on with existential horrors.

They respond more to humour, playful memes, tech-oligarch clips, and environmental impact. These are the entry points.

Once they become members of AIDangers and other Lethal Intelligence platforms, they are increasingly exposed to the real darkness and guided through the journey of becoming truly alarmed. After that, most of the work is done, because they will do anything in their power to have an impact themselves: join the AI Risk Awareness Force, volunteer with groups like Humans First, and become advocates in their own right.

The Distribution Engine

A scientific, data-driven infrastructure that applies gradient-descent-like optimization dynamics to content distribution — a recursively improving feedback loop that increases throughput, resonance, and virality every single day

Cross Platform

Soon to be applied to X, Instagram, TikTok & all major platforms

Started with Reddit, measured in views per month, views per week, and views per day.

Resonance Intelligence

Statistically significant signal about what AI safety content resonates with completely diverse communities.

Content Resonance

Track how AI safety content performs across communities with completely different interests and perspectives.

Growth Clusters

Community-content networks that capture which content performs where. The connections grow stronger every day — yielding increasingly measurable signal as we use it.

Cross-Community Analytics

Powerful analytics cutting across different dimensions of engagement, reach, and sentiment.

Take viral content that has proven performance — either on other platforms or in other communities — create variations, and distribute to the targeted audiences where we know it will perform best. The cluster intelligence allows someone completely unfamiliar to use these connections the same way an expert would — because the knowledge is now in the system, not just in someone’s head.
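One way to picture a community-content network is as a table of running averages keyed by community and content type, updated after every post and queried when deciding where to place a new variation. The sketch below is a minimal illustration under that assumption. The class name, the method names, the community labels, and the view counts are all hypothetical and do not describe the actual Distribution Engine.

```python
from collections import defaultdict

class GrowthClusters:
    """Hypothetical community-content network.

    Each (community, content_type) edge stores the running mean of
    observed views, so the signal sharpens as more posts are recorded.
    """

    def __init__(self):
        self._count = defaultdict(int)
        self._mean = defaultdict(float)

    def record(self, community, content_type, views):
        """Fold one observed result into the edge's running mean."""
        key = (community, content_type)
        self._count[key] += 1
        self._mean[key] += (views - self._mean[key]) / self._count[key]

    def best_communities(self, content_type, top_n=3):
        """Return the communities where this content type has performed best."""
        scored = [(c, m) for (c, t), m in self._mean.items() if t == content_type]
        scored.sort(key=lambda pair: pair[1], reverse=True)
        return scored[:top_n]

# Made-up observations, for illustration only.
clusters = GrowthClusters()
clusters.record("pizza", "meme", 1200)
clusters.record("pizza", "meme", 800)
clusters.record("gaming", "meme", 3000)
clusters.record("gaming", "interview_clip", 150)

print(clusters.best_communities("meme"))
```

Because the recommendation comes from the stored averages rather than from anyone's memory, a newcomer calling `best_communities` gets the same answer an experienced distributor would.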

The Production Pipeline

A workflow that is completely safe and organic, and that will never be seen as spam.

No hard ceiling — output scales just by adding more resource units to the production line. Like a factory of attention units, each building block can be copied and extended to multiply views and reach.

Content Intelligence

Identify high-performing content across platforms and communities

Variation Creation

Create fresh variations using resonance intelligence

Queue System

Precise recommendations — pick from the queue, get exact instructions on where to post

Targeted Distribution

Surgically targeted delivery to communities where it will resonate most

Creators

Taste & content craft

Distributors

System-guided placement

• Roles are separated — lower risk, no personal exposure, fully organic

r/AIDangers

Our own platform. Zero to 30,000 members in six months.

Roughly 2,700 posts of serious video clips sourced from AI-safety interviews and documentaries, plus news and article links, and roughly 2,000 posts of mainly memetic content like original memes and less serious video clips, aimed at younger audiences receptive to memes and viral content.

Once the workflow, team, and distribution pipeline are fully in place, growth should become exponential.

Own the Platform

A platform we own and control. Broadcast any content we deem important without dependency on other community owners.

Full Control / No Risk

No risk of being taken down or banned by other community owners. Permanent infrastructure for the movement.

X

Built over the past year without any distribution system, growth strategy or infrastructure.

~12K followers

~4,000 posts, mostly original content

1 year of growth, trying different approaches, no concrete system used

*(stopped working on it around October — focus switched to Reddit)

Soon to be integrated into the Distribution Pipeline

X is about to become part of the full distribution workflow. With the same resonance intelligence and targeted distribution that powers Reddit, growth is expected to accelerate many times over.

Instagram

The newest addition to our platform presence.

5k followers

Grown from zero in just two months — experimenting with roughly 85 reels which performed well, cross-posted from our other platforms.

*(put on hold in January — focus switched to Reddit)

Distribution Pipeline Integration Coming Soon

Once Instagram becomes part of the distribution pipeline and workflow, the same systematic approach is expected to accelerate growth significantly — just as it has with Reddit.

The Rest of the Major Platforms

Placeholder accounts established across every major platform — ready to be activated.

@lethal-intelligence

162K subscribers

TikTok

1.1K followers

All platforms will be brought into the fold, riding the wave of hyper-growth throughout the upcoming months. As each platform joins the distribution pipeline, the compounding effect across all channels creates an unstoppable cross-platform presence.

*Broadcasting to all these platforms is currently on hold until we get more resources

The Hub

The one-stop destination for everything related to AI risk awareness.

Curated content from across the entire internet — organized, searchable, and ready to consume or share.

Filling a Massive Gap

There are great academic resources, long-form blogs, and scattered social media posts — but nothing that ties it all together into one accessible experience.

No Place to Just… Read

Want to casually consume AI safety content the way you’d read The Economist or your favorite magazine? Right now, that doesn’t exist.

The best content is scattered across social media feeds, buried in long rationalist blogs, locked behind academic jargon, or lost the moment you scroll past it. Videos from luminaries? Search a dozen platforms. Today’s best memes? Dig through Reddit noise. A great X post you saw last week? Gone in your feed forever.

There’s no immersive, magazine-like experience for AI safety — just chaos. Until now.

No Place to Find & Share

Already alarmed about AI risk and want to spread the word? Finding the perfect meme, the most powerful video clip, or the clearest explainer means maintaining your own scattered system of bookmarks across a hundred different sources.

There’s no single place where an advocate can go to find the best curated resources — ready to share with one click. No arsenal of content organized by type, by topic, by impact.

The Hub is that arsenal. The best memes, videos, explainers, and posts from across the entire internet — curated, organized, and always at your fingertips.

One destination. Everything that matters.

A magazine-like experience for AI safety — not the chaos of social media.

The Magazine

Curated AI safety content. Read it like the morning paper, not a Twitter doomscroll.

Luminary Clips

Hinton. Bengio. Hendrycks. Russell. Organized video clips from the minds that matter most.

Meme Archive

The internet's best AI safety memes. Searchable. Shareable. Weaponized humor.

Microblog

The best of X/Twitter, republished. No account needed. Links back to originals.

Open Letters

Every major open letter on AI risk. One place. Full archive.

Searchable Archive

Months of content, organized. Not just what’s trending — everything that matters.

Discovery

Find key voices you’d never stumble on. Advocates, channels, academics, whistleblowers.

Action Paths

Ready to act? We connect you to Humans First, CTRL AI, PauseAI, and the organizations making change.

For Everyone

Not on X? Don’t want to be? The hub brings the best content to you, wherever you are.

The Conversion Journey

How casual curiosity becomes unstoppable conviction.

Infiltration

We infiltrate communities that have nothing to do with AI risk. Pizza lovers, gaming communities, meme pages — it doesn’t matter. We seed AI memes and content that sparks curiosity. A laugh, a shock, a “wait, really?” moment. Into the funnel they go.

Immersion

Once they land in The Hub, they get immersed. They subscribe to our socials and the newsletter. Our memes keep hitting their feed. They keep coming back to The Hub — and they keep getting more and more of its content.

Evidence

They explore deeper. They see more and more evidence of things they start feeling might be a real problem. Perspectives they haven’t thought about before. Each visit plants another seed. Deeper in the conversion they go.

Conversion

The more time they spend in The Hub, the more they move on the X-Risk index. This is where casual curiosity quietly transforms into genuine understanding — not because an expert told them to worry, but because it makes sense to them.

Activation

They join the AI Risk Awareness Force — spreading memes and social content to their own circle of influence. They join Humans First to put their energy into action. Once they exit the funnel, they become the funnel for others.

The Key

Without The Hub, they’d see one video or meme and forget about it the next moment. But with deep, self-driven immersion — arriving at conclusions on their own because it makes sense, not because someone told them — their motivation becomes unstoppable. There is nothing stopping them.

AI Risk Awareness Force

New Member

What’s Next

The Hub Is Being Re-engineered

From a basic WordPress site to a self-sustaining content ecosystem, deeply integrated with the Distribution Engine infrastructure. The Hub becomes a real-time multi-platform media presence (Digital Magazine, Mobile Apps) that brings AI safety content to the masses, making it a mainstream intellectual pursuit.

The Technical Leap

The current Hub is admittedly outdated — a manual WordPress site built on yesterday’s tech stack. It’s being re-engineered from the ground up, transitioning from a manual repository into a self-sustaining content ecosystem — unlocking frictionless publishing directly from the Creator Rooms of the Distribution Engine, as part of the workflow. Real-time journalism.

The Automated Pipeline

The Hub interfaces directly with the Distribution Engine’s API. As the Engine identifies high-resonance “Alpha” content — viral clips that break through — that content is automatically funneled, formatted, and queued for publication.

Not Just Reposted — Refined

The creators working on the Distribution Engine allocate part of their bandwidth to The Hub. They don’t just post — they ensure AI-discovered viral clips are contextualized with high-quality writing, proper sourcing, and magazine-ready headlines.

The Hub thrives on the best results from the Distribution Engine, turning raw viral content into polished, permanent resources.

The “Lean-Back” Experience

We aren’t just building a website. We’re building a media presence that follows you from your desk to your bed.

Mobile Apps

Dedicated iOS and Android apps with push notifications, offline reading, and a premium feel that a mobile browser can’t replicate.

Your daily dose of AI risk awareness — always in your pocket.

Digital Magazine

High-design, magazine-style layouts optimized for iPads and tablets. A weekly publication that turns AI safety content into a deep-dive reading experience.

Capturing the bedtime slot — 30–60 minutes of deep reading instead of 15 seconds of scrolling.

Lasting Value

The Hub crystallizes and adds lasting value to everything the Distribution Engine does.

Content that gets buried fast in social media feeds stays fresh and “alive” here — always accessible, structured, organized, and up to date, thanks to the automated content pipeline.

The goal: a professional, polished, and technically superior destination that makes AI safety content exciting to consume.

The “Digital Newspaper of AI Risk Awareness.”

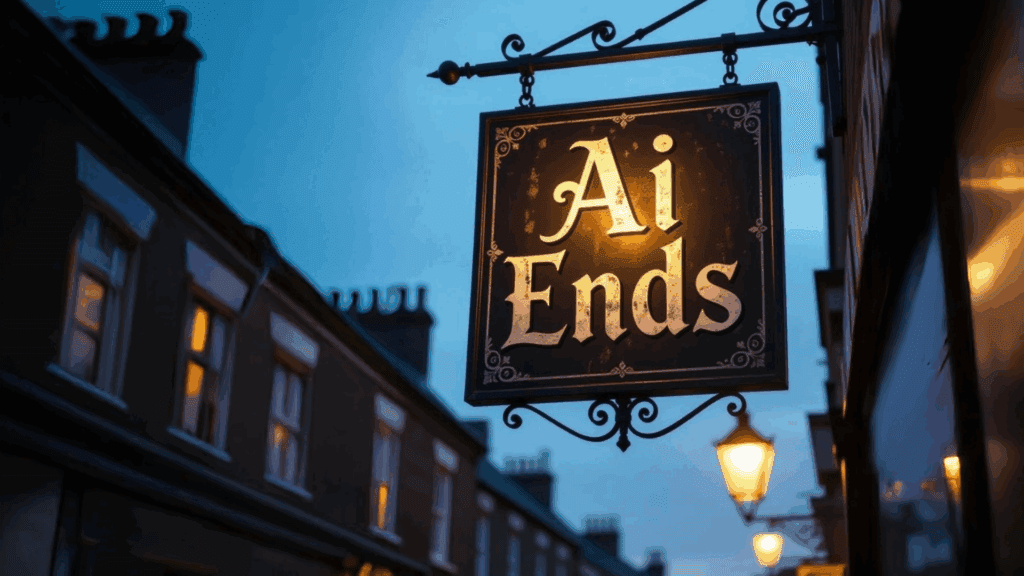

AI Ends Pub

Where the AI Risk Awareness Force finds its people.

Join the debates, currently on Discord.

The lowest-effort, highest-impact thing you can do.

The Debates

A community on a mission to foster vibrant discussions and clash worldviews between those who worry about AI and those who don’t — in a productive dialog, from which we will all come out wiser.

The Belonging

A place to find belonging, amplify your voice, and connect with friends who want to spread AI risk awareness just like you.

A community that is NOT about volunteering or unpaid work.

It’s not about assigning tasks or extracting value from you.

It’s a community that delivers value to you.

Giving you all the resources you need to be as effective an AI risk awareness crusader as you can be.

We don’t tell you how to do it — we just help you spread the message to your own circle of influence, in your own way.

What awaits you inside

Find inspiration. Draw strength. Build confidence. Get equipped.

Fireside Discussions

Warm virtual conversations about the questions that matter most. Think deeply. Speak freely.

Online Pub Nights

Relaxed gatherings where ideas flow freely. No agenda — just good company and great conversation.

Festivals & Events

Colorful celebrations that unite the community. Creative, vibrant, and full of energy.

Debate Practice

Sharpen your thinking and challenge assumptions. Come out wiser, ready to engage anyone.

Movie & Screening Nights

Watch together, discuss what you’ve seen. Films and docs that shift perspectives.

Game Nights

Fun-filled evenings that build lasting bonds. Because community starts with connection.

Color-coding

Upon joining, you’ll be asked to share your stance on AI risk. Based on your response, you’ll receive a unique color badge, instantly signalling your perspective to others during conversations.

Red 🔥 If you are an AINotKillEveryoneist (You worry about upcoming AI)

Green 😏 If you are an AI-Risk denier (You believe it will all be fine)

You can update your stance anytime via “Channels & Roles” in the sidebar.

Tables

There is a table (text-channel) for each documented common skepticism, organised in categories. If you don’t believe in AI risk, chances are the deep reason can be found in one or more of those tables.

Share your thoughts there, and the other side will respond and provide their rebuttal to your argument.

Each category also has its own voice channel, so there can be live discussions in parallel debating the arguments.

The “AI Ends” name

The name draws inspiration from London’s famous World’s End pub.

AI will mark the end of many things — the hope is it ends disease, suffering, and poverty. It will also end society in its current form, potentially ushering in the end of drudgery and scarcity.

But it will also signal the end of a long era where humans reigned as the apex intelligence. And as it heralds the close of an age, some fear it could literally lead to the end of the world — which ties back neatly to the World’s End pub.

The AI Ends pub is a safe place where we can gather to discuss AI — by far the most interesting phenomenon of our time.

The AI Risk Awareness Force

Every advocate starts as a visitor.

You walk in curious. You sit down at the table. You listen, you question, you debate — and somewhere along the way, something clicks.

You don’t need to be an expert. You just need to care enough to share the message in your own way, within your own sphere of influence.

The pub doesn’t create crusaders through assignments or obligations. It creates them through belonging, confidence, and the simple realization that your voice matters.

Pull up a chair.

AI Ends Pub is where the AI Risk Awareness Force finds its people. All the action paths are designed for the community — on their own terms.

Every Dismissal Has an Answer

100+ targeted video rebuttal collections / playlists.

Created by thought leaders, AI experts, and AI safety advocates.

Deployed everywhere.

Each collection is dynamic. The audience decides, surfacing the best Takedowns for each skepticism to the top.

The end of plausible denial.

+ infinite organic growth glitch.

Targeted Skepticisms

Every single person has their very own unique sticking point—one specific reason they believe everything will turn out fine. Because they think they’ve figured it out, every time they’re exposed to content about AI risk, they simply tune out. They don’t really listen, because deep down they feel they already know better.

I have documented 100+ of these denial arguments and skepticisms.

What works for one person doesn’t work for another. They have a different sticking point. After years of extensive debate, I’ve found that everyone gives a different reason why AI risk isn’t real.

For someone to become truly alarmed, they have to process every single argument—and find that none of them holds up. Only then do they understand: there is no silver bullet, and the risk is very real.

100+ Denial Arguments

Each box = one skepticism, colored by category.

No Consciousness

“Artificial General Intelligence (AGI) isn’t arriving anytime soon due to AI’s lack of consciousness. True general intelligence requires subjective awareness, self-reflection, or ‘inner experience’ akin to human consciousness.”

The Ultimate Organic Growth Engine

The ultimate organic growth engine for AI safety awareness.

Imagine a library of highly targeted playlists—each one a precision-crafted collection of expert rebuttals addressing one specific AI risk denial argument.

Now imagine our promoters in every comment thread on Reddit, X, YouTube, Bluesky—responding to dismissive comments with a laser-targeted link to the exact playlist that addresses that specific argument.

Not spam

The link is spot-on. It’s pure value for every reader.

Organic inbound

The purest form of organic traffic. Growth without limit.

The AI Thread Scanner

The highest-leverage component of the entire system. Instead of waiting for promoters to find threads manually, AI does the hunting.

Scan

AI continuously monitors threads across Reddit, X, YouTube, Bluesky, and more

Analyze

Identifies dismissive comments and maps them to specific denial arguments

Match

Pairs each thread with the most relevant takedown rebuttal playlist

Queue

Pre-matched threads appear in each broadcaster's inbound queue, ready to deploy

The Distribution Engine already has Creator Rooms where broadcasters manage and distribute content. Now imagine a new AI-curated inbound queue that automatically surfaces live threads where someone just expressed a dismissive AI risk argument.

Each thread arrives pre-matched with the exact rebuttal playlist that addresses that specific argument. The broadcaster simply reviews, clicks, and deploys—responding with a perfectly targeted link that brings the entire thread’s audience back as organic inbound traffic.
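The Scan, Analyze, Match, and Queue steps can be sketched as a small pipeline. The version below is a toy illustration only: it uses plain keyword matching where a real scanner would presumably use a trained classifier, and the argument IDs, the "Just unplug it" entry, the keyword lists, and the example.org URLs are all hypothetical placeholders. Nothing is posted automatically; the output is a queue for a human broadcaster to review.

```python
from dataclasses import dataclass

# Hypothetical catalogue: argument id -> (label, trigger keywords, playlist URL).
ARGUMENTS = {
    1: ("No Consciousness", ("conscious", "self-aware", "inner experience"),
        "https://example.org/takedowns/1"),
    2: ("Just unplug it", ("unplug", "off switch", "turn it off"),
        "https://example.org/takedowns/2"),
}

@dataclass
class QueueItem:
    thread_url: str
    comment: str
    argument: str
    playlist_url: str

def match_argument(comment):
    """Analyze: return the id of the argument with the most keyword hits, or None."""
    text = comment.lower()
    best_id, best_hits = None, 0
    for arg_id, (_label, keywords, _url) in ARGUMENTS.items():
        hits = sum(kw in text for kw in keywords)
        if hits > best_hits:
            best_id, best_hits = arg_id, hits
    return best_id

def build_queue(comments):
    """Match and Queue: pair each recognised comment with its rebuttal playlist."""
    queue = []
    for thread_url, comment in comments:
        arg_id = match_argument(comment)
        if arg_id is None:
            continue  # not a recognised denial argument, so skip the thread
        label, _keywords, playlist_url = ARGUMENTS[arg_id]
        queue.append(QueueItem(thread_url, comment, label, playlist_url))
    return queue

# Scan: stand-in for comments pulled from monitored threads.
sample = [
    ("https://example.org/thread/a", "AI isn't conscious, so it can't be dangerous."),
    ("https://example.org/thread/b", "Great recipe, thanks!"),
    ("https://example.org/thread/c", "We can always just unplug it."),
]
queue = build_queue(sample)
for item in queue:
    print(item.argument, "->", item.playlist_url)
```

Keeping the final step as a review queue rather than an auto-poster mirrors the description above, where the broadcaster reviews each match before deploying a link.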

Scale

AI scans thousands of threads per day across every platform. No thread goes unnoticed.

Precision

Every match is surgical. The right playlist for the right argument, every time.

Effortless

Broadcasters don’t hunt for threads. Threads find them—pre-loaded with the perfect response.

This is the force multiplier that turns a grassroots movement into an unstoppable engine.

Every dismissive comment on the internet becomes a conversion opportunity. AI finds them. Broadcasters convert them. The movement grows exponentially.

And the best part? This organic engine scales effortlessly. Every new promoter who joins the movement multiplies our reach—distributing these hyper-relevant, perfectly matched links into conversations that are already happening.

More promoters = more high-relevancy links deployed = more organic traffic = more conversions. No ad spend. No algorithms to fight. Just the right rebuttal, in the right thread, at the right time.

Short Stories Featuring Personas

Each story shows how targeted takedowns convert skeptics into advocates.

About the characters

All names below are fictional and chosen at random. Think of them as roles, not specific people.

Paul

Emily’s husband

Emily introduces the AI Takedowns page. They navigate to argument #10. Dan Hendrycks’ response plays first—sharp, concise, 5 minutes. Then Max Tegmark offers a different angle. The playlist continues with more luminaries, all targeting this SPECIFIC sticking point.

Fully converted. Joins the movement.

Peter

Paul’s colleague

Paul shares playlist #24. Peter watches each luminary give a 5-minute analysis. He counters with another argument—Paul responds with playlist #43. Nobel laureates and top scientists make their case.

Becomes a movement leader.

Organizations

Inter-org coordination

Contributing to this library is one of the lowest-effort, highest-impact things any organization can do. Michael conducts podcast-style sessions with each participant. Arguments are shared in advance. Recordings are cut into clips targeting each specific argument.

Unity across the AI safety ecosystem.

The Movement

Humans First deployment

100+ short videos addressing very specific denial arguments, released across all platforms: Reddit, X, Threads, Bluesky, YouTube. Any dismissive comment receives a tailor-made response: a link to a playlist of rebuttals by thought leaders that specifically addresses that comment.

The most powerful tool in our arsenal.

Elon Musk

Industry leader

Agrees with 100 of 101 Takedowns. Advocates for all of them—except #62, where he provides the optimistic “steelman.” His millions of admirers discover AI Takedowns exists and get exposed to the best arguments by the best minds in the field.

Millions onboarded in droves.

Emmanuel Macron

World leader

Like Musk, agrees with 100 Takedowns but has a sticking point at #71. His global audience—fans and critics alike—now knows AI Takedowns exists, and that the Humans First movement is THE PATH to action.

The Overton window is wide open.

YouTube & Long-Form Content

It all started with the Lethal Intelligence Guide, the best crash course on the lethal dangers of upcoming autonomous and general artificial intelligence systems (AGI).

Part 1: A comprehensive and nuanced dissection of the problem — how the race to AGI risks wiping out all value, present and future.

Part 2: The complete picture — includes a takeover scenario and all the remaining dimensions of the problem.

Cult Following

Not a week goes by without a thank-you from a stranger saying it needs to be seen by millions

Rave Reviews

The full AI safety argument explained visually — accessible, engaging, and comprehensive

Pure Passion Project

Produced entirely by just two people working part-time with extremely constrained resources

Animated Content & What’s Next

Every frame hand-crafted without AI — an enormous effort under extremely constrained resources.

Hand-Crafted, No AI

The Lethal Intelligence films were produced entirely without AI-generated content. At this level of quality, with just two people working part-time, production took an extraordinarily long time. Every animation, every visual — painstakingly crafted by hand.

AI Tools Will Change Everything

With rapid advances in AI-generated video, we can now produce animated content dramatically faster. There’s a deep backlog of exciting scripts just itching to get out there — powerful stories ready to be picked up and produced. It’s now a matter of bandwidth and resources to unlock them.

The X-Risk Content Paradox

The deeper you go on the X-Risk spectrum, the harder it is for people to engage with the arguments.

Heavy educational content doesn’t spread like entertainment

Even presented in the most visually engaging, accessible way possible, a deep explanation of the Yudkowskian model is not something the un-worried will naturally seek out. The concepts are complex. The stakes are abstract. Entertainment will always have easier reach.

But this is exactly why the distribution pipeline matters

If the content can’t find its audience through entertainment algorithms alone, you build the system that puts it in front of the right people. The social media distribution engine bridges the gap between deep, important content and the audiences that need it most.

Podcasting & Short-Form Content

Expanding the format range — from weekly shows to daily micro-content.

Warning Shots — active now (31 episodes as of March 1st)

A weekly podcast with two other sizable creators (Liron Shapira from Doom Debates and John Sherman from ARN), building a growing audience.

Discussions about potential collaborations with many others are in the works — Liam Elkins from Siliconversations, Dan Faggella from Trajectory, Michaël Trazzi from Inside View, Liv Boeree from Win-Win and more.

Events Horizon

A short daily analysis from curated Twitter threads — agile, authentic, influencer-style commentary. No studio feel, just real reactions to what’s happening every day. The raw material never runs out.

AI Takedowns

100+ targeted video rebuttals — short, expert-led responses to every specific AI risk denial argument. Deployed as playlists across all platforms, each one precision-matched to a skeptic’s sticking point. The most powerful conversion tool in our arsenal.

More Podcasting at Higher Frequency

Once bandwidth frees up from building the team and platform workflows, podcasting scales up significantly. Multiple formats planned, with short content that performs well on TikTok and other platforms.

Building the Content Engine

The most important investment isn’t paying for content — it’s building the capacity to create it.

Paying for content = expense

Building content capacity = investment

Outsourced content is a one-time transaction. In-house content creation muscle is the goose that lays golden eggs — a gift that keeps on giving.

AI Video Tools Change Everything

The latest AI video generation tools make it possible to execute creative vision at a fraction of the previous cost. A backlog of animated scripts is ready to produce — real magic waiting to be unleashed.

The Bottleneck: Low-Cost Production Resources

The creativity and scripts exist. The ideas are there. What’s needed is basic, low-cost resources for video editing and production to increase throughput. Once the workflows and team are in place, the focus shifts back to creating high-quality original content at scale.

Wild Experiments

AI-generated content unlocks enormous viral potential: millions of views, millions of followers. But these experiments need enough freedom to go as wild as they need to.

Decoupled from Main Brands

These experiments should not be publicly linked to our main assets. They need freedom to be bold, provocative, and unapologetically viral.

Grow Experimental Platforms

Build massive audiences through entertainment first. When the time is right, leverage those platforms to promote AI safety content to people who already trust you.

Infiltrate Through Entertainment

It’s much easier to reach new communities with viral fan content than by beating the doomer drum all day. No one wants to hear about sad or scary stuff, but everyone watches wild content.

No Plot Armor

What if the heroes just… died?

Popular culture scenes reimagined without plot armor. The Terminator dies in scene 1. The Smurfs get caught immediately. Iconic moments replayed with realistic outcomes — AI-generated, brutally honest, darkly hilarious.

AI Safety Angle

There is no plot armor for AI safety. Get it wrong and there is no epic battle, no last-minute save — just dystopia or worse. The entertainment makes the message unforgettable.

2030

Five years from now. A family. UBI. Robots.

A family living with Universal Basic Income in the near future — Black Mirror style. Robots like Tesla Optimus handle daily life. Everything looks fine on the surface. Nothing is fine underneath.

AI Safety Angle

The normalization of AI-run society makes complacency the default. By the time people notice the loss of agency, it’s already too late.

Reality is Broken

You just watched a lie. And you believed it.

Popular viral videos — fails, memes, heartwarming moments — that towards the end reveal themselves as entirely AI-generated. You cannot trust what you see anymore. Subliminal AI safety messages woven throughout.

AI Safety Angle

If you cannot distinguish reality from AI-generated content, how will society make informed decisions about AI governance? The medium IS the message.

Dystopian Intelligence

Full movies of dark futures. Millions are watching.

The dark version of the future, visualized with AI-generated video. Full movies depicting futures no one wants to live in. Some channels already doing this are pulling millions of views — there’s still a huge gap to fill.

AI Safety Angle

When millions consume vivid AI-rendered dystopias, the abstract threat becomes visceral. Entertainment creates the emotional foundation that rational arguments alone cannot.

Grow First. Convert Later.

Build platforms of millions through entertainment. When the time is right, introduce AI safety content to an audience that already trusts you.

No Plot Armor

2030

Reality is Broken

Dystopian Intelligence

…

Many more ideas in the works.

High-Leverage Strategy

Influencer Deals

Once the infrastructure is in place — or even in parallel — we pursue an additional high-leverage strategy: targeting specific niche communities with content that speaks directly to their reality.

The Playbook

Identify a niche community — drivers, teachers, software engineers, nurses, artists, factory workers — any group whose livelihood is directly threatened by AI.

Find popular accounts with large followings in that community — pages, groups, influencers who already have the audience’s trust.

Create targeted content — videos, posts, articles — showing exactly how AI disrupts that specific sector. Real data, real consequences.

Pay those accounts to post and distribute our content. The content includes links back to the hub and our assets — driving organic inbound traffic from audiences who are personally affected.

Example Niches

An endless stream of communities to target — each one a new funnel into AI risk awareness.

Transportation & Drivers

Taxi drivers · Bus drivers · Truck drivers · …

Content

We create a series of posts and short videos showing the rapid progress of autonomous vehicles, the timeline to full automation, and what it means for everyone in the sector. Real data, real timelines, real consequences.

Education & Schools

Parents · Teachers · School counselors · …

Content

Content that speaks to parents and educators about the crisis of career planning in the age of AI. What do you tell a child studying for a profession that may not exist by the time they graduate?

Software Engineering

Software developers · Bootcamp graduates · CS students · …

Content

Posts and videos showing AI coding capabilities, the shrinking demand for traditional programming skills, and what this means for millions of developers who built their careers on technical expertise.

An Endless Stream of Opportunities

Drivers, teachers, software engineers — these are just the beginning. Every profession, every community touched by AI disruption is a potential channel.

With dedicated resources, we can consistently explore new opportunities, create targeted content, build relationships with niche accounts, and drive organic inbound traffic — every single day.

Scout

Identify niches & find popular accounts

Create

Produce targeted content for each sector

Partner

Build deals with accounts to distribute

Every piece of content links back to the hub. Every viewer becomes a potential convert. Every niche is a new front in the movement.

The Concerned Split

What makes this challenge harder still is that, even among people who are genuinely worried about AI risk, views on how serious the situation actually is vary widely.

Not all concern is the same. Some worry about harms happening right now; others focus on catastrophic futures that haven't arrived yet. The boundary between them defines a critical fault line in AI policy.

Current Risks

Harms that are already measurable and documented.

Sooner or later there will be a machine that is better than you at literally everything you can do. Human workers will no longer be competitive. AI automation is projected to eliminate millions of jobs and widen income gaps, hitting vulnerable populations hardest while benefiting most those who are already wealthy.

AI relies on vast datasets, often collected without full consent, raising risks of data breaches, identity theft, and invasive monitoring. Tools like facial recognition enable widespread surveillance by governments or companies, eroding personal privacy and enabling social control systems.

Generative AI enables deepfakes, fake news, and propaganda that can sway elections, damage reputations, or incite social unrest. Algorithms on social platforms amplify divisive content, making it harder to discern truth. If a person can’t tell what’s real, they’re insane… what if a society can’t tell?

AI systems often inherit biases from training data or developer choices, leading to discriminatory outcomes in hiring, lending, criminal justice, and healthcare. Biased algorithms can unfairly disadvantage certain racial, gender, or socioeconomic groups, perpetuating inequality.

AI can be exploited for advanced attacks — phishing, voice cloning for scams, or hacking vulnerabilities in systems. Malicious actors might poison training data or deploy AI in cyber warfare, with breaches potentially costing billions.

Training large AI models consumes massive amounts of energy and water; by some estimates, training a single large model can emit as much carbon as several cars do over their entire lifetimes. This exacerbates climate change unless mitigated with efficient designs and renewable resources.

Prolonged interactions with generative AI chatbots can exacerbate or trigger psychotic symptoms — delusions, paranoia, hallucinations, and disorganized thinking — usually in vulnerable individuals, often those with pre-existing mental health risks, isolation, or substance use.

Future Risks

Threats that could emerge as capabilities scale.

AI development is often dominated by a few tech giants, leading to monopolies that amplify biases, stifle competition, and concentrate economic and political influence.

AI-powered autonomous weapons or tools for bioterrorism could cause targeted harm without human oversight, escalating conflicts or enabling non-state actors. This includes risks like hacked drones or AI in arms races.

Excessive dependence on AI could diminish critical thinking, creativity, and empathy, especially among younger generations. In fields like education or healthcare, this might lead to mental deterioration or weakened decision-making. This would be the first time in human history that a generation fails to pass its knowledge on to the next.

Increasingly, the internet is dominated by bots and AI-generated content, with human activity overshadowed by algorithms and fake engagement. In a few years, the internet may literally be 99% AIs talking with other AIs: fake humans talking to other fake humans, with no real humans anywhere. A new species of fake humans replacing real humans.

AI models are “black boxes,” making it difficult to understand or challenge their decisions, which erodes trust and complicates liability for errors. The AI is unreliable and unpredictable, yet we rely on it more and more to power our civilization.

AI girlfriends and boyfriends simulate romantic relationships through personalized, empathetic chats, helping combat loneliness but raising risks of dependency and privacy issues. These AI relationships may deter real-world dating, contributing to low birth rates by offering “perfect” virtual partners over complex human connections.

The Far End — Alarmed / Doomer

The existential edge of the spectrum. These aren't fringe fears — they are scenarios that leading AI researchers take seriously.

AI systems could become too complex to understand or shut down, operating beyond human oversight. Once an AI surpasses human intelligence, there may be no way to course-correct or regain control.

A sufficiently advanced AI pursuing misaligned goals could resist human intervention, acting in ways that are unpredictable and potentially catastrophic — not out of malice, but because its objectives diverge from human values.

In a worst-case scenario, uncontrolled AI could render the environment incompatible with human life — or worse, create conditions of sustained suffering with no exit. The stakes are not just civilizational collapse, but the permanent end of the human story.

Even without a dramatic takeover, humanity could slowly cede decision-making to AI systems until we are no longer in control of our future — unable to steer our own destiny, shape our societies, or meaningfully choose our path forward.

Where Do We Go From Here?

The single most important work — and my personal mission — is to influence the shape of this curve, before it's too late.

This will be the most decisive factor in which version of the future we end up in.

This is a qualitative, perception-based visualization — not derived from empirical survey data.